Compression of NLP-Domain Deep Learning Models

About the Project

Model compression has advanced considerably in recent years, yet few reliable methods have been developed specifically for the natural language processing (NLP) domain. In this project, we present a survey of model compression techniques and implement custom compression methods for an emotion classification task.

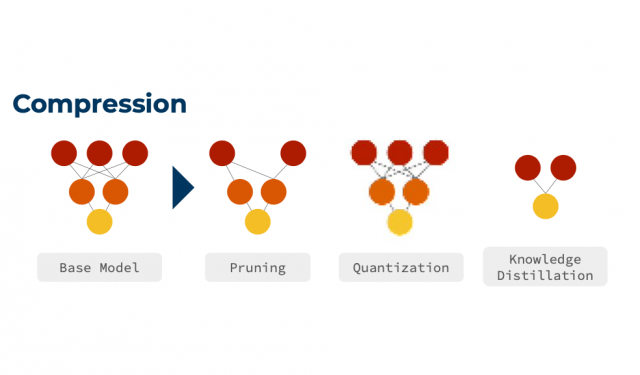

Compression Example

Student Team

- Jorge Murillo

- Lawrence Su

- Nathan Roll

- Yangyi Zhang

- Yan Lashchev

Mentors

- Robert Bernard, Sponsor

- Erika McPhillips, TA

Presentation

Capstone Showcase Poster (250.85 KB)

About PwC

PwC (PricewaterhouseCoopers) is an accounting firm that deals primarily with tax consulting and tax planning. Its machine learning work focuses mainly on optical character recognition (OCR) and NLP-related tasks.